Build or Buy Geospatial Data: A Rational Choice Is Needed

While a growing number of organizations in a diverse range of industries (finance, insurance, retail, real estate, etc.) are increasingly turning to geospatial data for their business needs, purchasing the right data can be a challenging process. There are two factors that contribute to the complexity of geospatial data. On the one hand, geospatial data is always subject to a certain degree of uncertainty, as it is the reflection of a complex and often incomplete sociospatial environment. On the other hand, the diversity of ways in which this data is produced (networks of sensors, drones, satellites, geocoding, crowdsourcing, etc.) places a limit on the traceability and the genealogical characterization of a data set at a given time. Given the inherent complexity noted above, there are some key considerations that must be considered when purchasing data.

Commercial Off-The-Shelf Data vs. Custom Data Sets

For many years, the only possible solution was to contact a specialized company in the geospatial industry that would collect and produce a custom data product. Today, the market for data that are ready-to-use (or nearly) is mature and provides a wide range of options. This is the case for raster data (airborne or satellite imagery, orthophotos, and 3D Lidar models) and also, increasingly, for vector databases. These databases, released by national or regional mapping agencies, such as StatCan data, are mostly open and ostensibly “free of cost.” There is also value-added data available from private companies such as DMTI, Foursquare, HERE Technologies, Precisely, and Google. This near-complete shift in the geospatial data market has blurred the lines and led to a false sense of things being free and easy to integrate. However, geospatial data is expensive to produce (fleets of vehicles, airborne sensors, etc.), process, and disseminate, whether it is open and free of costs or not. It is also complicated to integrate.

The decision to purchase data generally takes place within a specific economic and financial context. A number of studies have attempted to assess the economic impact of geospatial data, proposing models for measuring return on investment, avoided costs, and the value chain. Commercial off-the-shelf (COTS) data has the advantage of having a clearly specified cost and dedicated features that come with the already integrated spatial operators. However, the predetermined framework may, in some cases, be a drawback. The calculation of the total cost must take into account the base price, as well as the cost of integration, aggregation, analysis, and processing, in addition to the costs of services, maintenance, and updating. Thus, the number of open data portals that now exist does not mean there are no costs. Data always have costs. Data that are ostensibly free may, in the end, require processing costs that are higher and more difficult to anticipate. It’s food for thought…

Internal and External Quality: The Relative Interest of Metadata

All geospatial data come with uncertainty and errors associated with collection, modeling, geometric, semantic, or temporal matching, integration, and processing. A data set can therefore be more or less adequate. Managing the uncertainty associated with data therefore implies the need to evaluate its level of reliability, accuracy, and precision in relation to the situation it represents. The issue of data quality is thus at the core of these considerations. The intrinsic characteristics of the data (internal quality), measured using the criteria defined in the ISO 19113 standard (geometric precision, completeness, semantic precision, logical coherence, timeliness, etc.), are used to determine the level of pertinence (external quality) of a data set to a particular use and, if necessary, the type of processing required before its use. The external quality of the data, namely its suitability for use, must be able to answer a simple question: what are the business needs and to what extent does the data set meet these needs? Metadata (data about the data), when complete, up-to-date, and accessible, is also a good indicator of internal quality and a means of assessing the external quality of the data.

Anticipating Risks and Responsibilities

Given that geospatial data are essentially observational data (and therefore subject to uncertainty), a representation of the world (and therefore always incomplete), rarely up to date (and therefore partly obsolete), and often complex and technical for non-experts, it should always come with warnings, and terms and conditions. This is even more important considering that the methods and conditions used to produce geospatial data have become increasingly decentralized, but also that this data is produced and sold to be reused, modified, integrated, and used in different ways. Geospatial data is essentially a common good.

Following emerging regulatory frameworks, jurisprudence, and even, for Quebec, the Civil Code, the data producer’s obligations (and by extension, those of the data integrator) are numerous: they must consider the type of data and its foreseeable uses, state their reservations about the quality of the data, specify the level of uncertainty associated with it, and prevent this uncertainty by identifying and naming the risks. For this reason, the purchase of geospatial data should always be accompanied by a serious reflection on the possible uses of the data set, the risks of any inappropriate use, potential controversies related to the interpretation of the data, and the emerging legal considerations. There is an ever-increasing number of applicable regulatory frameworks that vary in scope, and which are in some cases extraterritorial, to clarify the legal obligations involved with these data sets. The General Data Protection Regulation (GDPR), for example, establishes rules for the protection of individual personal data, including geolocation. The ISO 27001 and 27701 standards provide an excellent framework for the application of the GDPR with respect to basic data security management measures (cybersecurity) and more specifically the protection of private data.

Considering the foregoing, the choice of geospatial data must not be taken lightly, even if, at first glance, purchasing data may appear simple. Mistakes can end up being very costly. Accordingly, prior to any purchase, a review of the available data, an analysis of the compatibility of these data sets with the intended uses, and the implementation of a rigorous selection process should be carried out.

One Possible Approach to Reducing Complexity by Leveraging Geospatial Expertise

1. Conduct a Compatibility Analysis

Although this list is certainly not exhaustive, it is important that the data sets that are identified during the pre-purchase review be analyzed with respect to the internal quality characteristics of:

- the geospatial frame of reference: positioning, shape, neighborhood relationships, spatial dimension (0D, 1D, 2D), granularity and minimum dimension, accuracy, reference system, data structure (raster/vector), etc.;

- the semantic (thematic) frame of reference: meaning of the data (identification and description of attributes and their values);

- the temporal frame of reference: date, duration, and validity of the data;

- the collection and processing methods: measurement, collection, and storage tools; the analytical tools used to structure, process, and disseminate the data; the format, coding, structure, and processing;

- as well as the recommended uses: type of use or modification permitted by the data – prohibition of sale, modification, terms and conditions, etc.

2. Selecting a Data Set

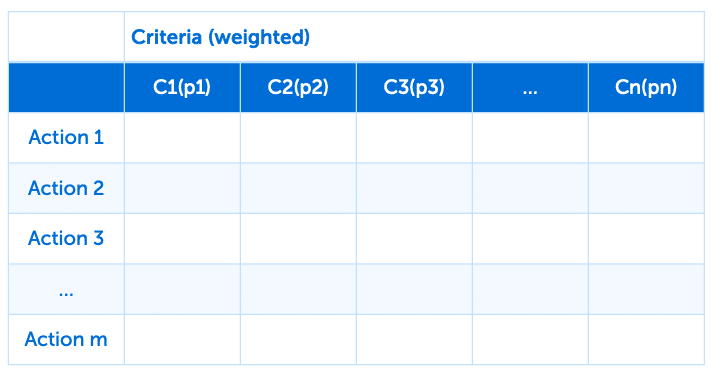

Once the compatibility analysis is done, the choice of data set(s) involves a selection process that may, for example, be based on a multi-criteria analysis framework. This is, of course, one of many qualitative and quantitative analysis methodologies available. The choice of method will often depend on the organization’s internal processes, its maturity in data literacy (data acumen), or whether the organization has employed a geospatial expert. One method is to list the business needs to be addressed by the data and the criteria (strict, weighted, indifferent) to be considered. Once the criteria have been defined, which is a complex process in and of itself, the next step is to create a matrix of the performance of each data set and to aggregate this performance in order to rank the data sets in terms of their external quality.

3. Creating a Selection Matrix

The results can be summarized in a selection matrix (e.g., network cost analysis, comparison of the customer base between 2000, 2010, and 2020). The columns represent the criteria (e.g., presence of traffic constraints on the network, date of data set update) and the values in the matrix correspond to the preference of each action with respect to each criterion (network characteristic: for example, weighting based on degree of effort required to carry out required processing, date: yes/no). The thresholds of preference or indifference are indicated, as are the relative weights used to weight each of the criteria, if needed.

Selection matrix (sample)

Selection matrix (sample)

Conclusion

Buying geospatial data is no minor task, starting with the knowledge needed to carry out a survey of potential data products. The level of expertise required to analyze, process, and choose the data that will give the organization the product best suited to its needs (the best external quality) has grown as the market and the options have become more complex. The geospatial expert’s role is to assist organizations with the data acquisition process to ensure the data meets their needs, and thus align the purchasing strategy with the organization’s mission and its business model.