Navigating Cloud Migration Challenges for Data Warehousing

April 11, 2023

With cloud computing offering tremendous opportunities to break down data silos, it’s no surprise that companies are increasingly migrating their on-premises databases to cloud data warehouses. But not all companies are at the same stage in their journey to the cloud. On top of the time it takes to migrate entire datasets to these new systems, the actual capabilities (or lack thereof) of these warehouses can cause a lot of friction across departments.

The various stakeholders (whether they’re GIS, Data Science, or IT teams) must also change how they work. They need to move from an ETL (extract, transform, load) mindset to an ELT (extract, load, transform) mindset to take advantage of cloud scalability, and they need to adopt cloud data governance and security concepts.

Your company has probably already opted for a warehousing solution, but do you know the extent of its geospatial capabilities? And is it really the best solution for your needs?

Korem is here to help your teams with their geo-enablement, demystify the various cloud options available on the market, and overcome your on-premises-to-cloud migration challenges.

Not All Cloud Data Warehousing Solutions Are Created Equal

It is not uncommon to find gaps in knowledge regarding the geospatial capabilities of the chosen or available cloud application, especially if your company lacks cloud talent or is well accustomed to its former on-premises warehouse.

Most data warehousing solutions come with common geospatial capabilities that will address basic use cases. But the true power of these solutions comes in the form of user-defined functions, which will help stakeholders augment their system’s capabilities with custom logic and advanced geospatial operators they may be accustomed to.

Broadly speaking, user-defined functions are computed either internally or externally.

Internal Operations

For internal operations, the data stays in a closed circuit and computations leverage the data warehouse’s own scaling capability. Thus, the data never leaves the cloud data warehouse. Internal functions also benefit from simple deployment types that range from a one-click installation to a step-by-step configuration.
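As a rough illustration of an internal user-defined function, the sketch below registers a distance function inside a database engine and calls it from SQL, so the rows never leave the engine. It uses Python's built-in `sqlite3` module purely as a local stand-in for a cloud data warehouse; the `HAVERSINE_KM` function name, the `stores` table, and the sample coordinates are assumptions made for this example, and each real warehouse has its own syntax for declaring such functions.

```python
import math
import sqlite3


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance in kilometres between two latitude/longitude points."""
    radius_km = 6371.0  # mean Earth radius
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    d_phi = phi2 - phi1
    d_lambda = math.radians(lon2 - lon1)
    a = (math.sin(d_phi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(d_lambda / 2) ** 2)
    return 2 * radius_km * math.asin(math.sqrt(a))


# SQLite stands in for the warehouse (assumption for this sketch).
conn = sqlite3.connect(":memory:")
# Register the Python function so it can be called from SQL with 4 arguments.
conn.create_function("HAVERSINE_KM", 4, haversine_km)

conn.execute("CREATE TABLE stores (name TEXT, lat REAL, lon REAL)")
conn.executemany(
    "INSERT INTO stores VALUES (?, ?, ?)",
    [("Quebec City", 46.8139, -71.2080), ("Montreal", 45.5017, -73.5673)],
)

# The computation runs inside the engine, next to the data.
rows = conn.execute(
    "SELECT name, HAVERSINE_KM(lat, lon, 46.8139, -71.2080) AS km "
    "FROM stores ORDER BY km"
).fetchall()

for name, km in rows:
    print(f"{name}: {km:.1f} km")
```

The point of the design is that only the query result crosses the boundary of the engine; the table itself is never exported.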

A wide variety of user-defined functions cover both basic and advanced geospatial operations, including transformations, measurements, and clustering. Other possible functions include:

- Binning (H3, quadbin, S2)

- Tiling (vector tiles)

- Geocoding

- Address validation

- Routing

- Raster analytics

External Operations

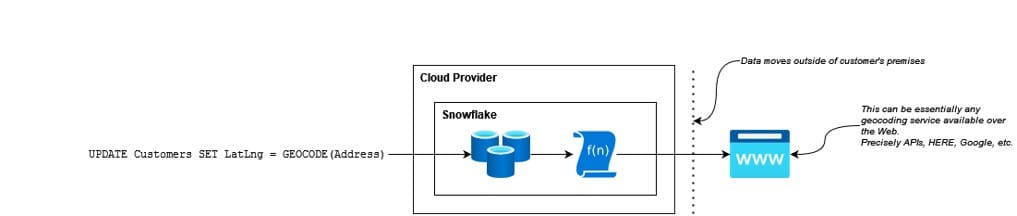

Depending on your needs, not everything can be computed inside the data warehouse. This can be caused by file system limitations, programming language barriers, and/or complex interactions with custom dictionaries. You might need, for example, to leverage an external API to achieve your goals.

For these external operations, the data leaves the premises to hit an outside source. The computations, therefore, run on an external web service. Since scalability is managed externally, this can pose performance and cost concerns, as latency and ingress/egress fees can often lead to surprises down the line.
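One common way to limit the latency cost of external operations is to send rows in batches rather than one request per row. The sketch below only illustrates that idea under stated assumptions: `fake_geocoder` is a hypothetical stand-in for a real web service call, and the batch size of 100 is arbitrary, since actual limits and pricing depend on the provider.

```python
def batched(items, size):
    """Yield consecutive slices of `items` with at most `size` elements each."""
    for start in range(0, len(items), size):
        yield items[start:start + size]


def geocode_all(addresses, call_service, batch_size=100):
    """Send addresses to an external service in batches.

    Returns the collected results and the number of requests sent,
    since each request adds a network round trip.
    """
    results = []
    requests_sent = 0
    for batch in batched(addresses, batch_size):
        results.extend(call_service(batch))
        requests_sent += 1
    return results, requests_sent


def fake_geocoder(batch):
    """Hypothetical stand-in for an HTTP call to an external geocoding API."""
    return [(0.0, 0.0) for _ in batch]


addresses = [f"{n} Main St" for n in range(250)]
coords, n_requests = geocode_all(addresses, fake_geocoder, batch_size=100)
print(f"{len(coords)} addresses geocoded in {n_requests} requests")
```

Batching reduces the number of round trips, but the data still leaves the warehouse, so any egress fees and data sensitivity concerns remain.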

Just like internal operations, external operations benefit from simple deployment types as you can easily leverage existing Software as a Service (SaaS) solutions. However, depending on the sensitivity of the data, moving data in and out of the data warehouse might not be a preferred solution.

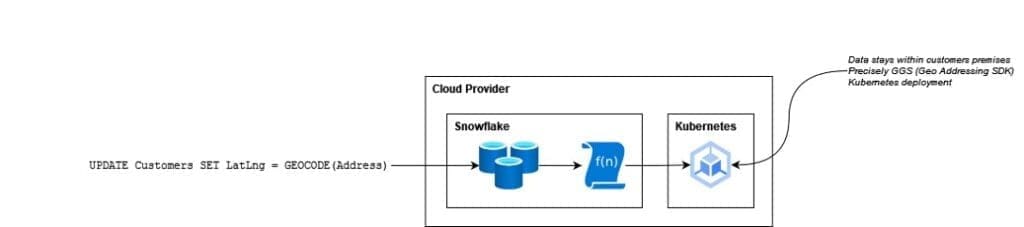

Fortunately, a third possibility exists, which is essentially a combination of both. Functions still call an external web service, but this time, the web service is hosted in your own environment (public or private cloud). This allows very secure operations, lowers latency, and eliminates ingress/egress fees.

However, this option involves more complex deployments, such as highly scalable and resilient Kubernetes deployments, and therefore requires much more technical expertise.

Challenges of Data Warehouse Migration to Cloud

Data Integration Process

Migrating data into a cloud-based solution involves significant effort to consolidate and synchronize different formats and types of data with multiple purposes and from various sources and applications, such as customer relationship management systems and enterprise resource planning.

It also involves moving from an ETL to an ELT process. As data transformation is performed after loading the data into the target system in ELT, this can lead to a complex data transformation process. This makes it more challenging to analyze and transform a high volume of data as it is already loaded. Thus, you must ensure that you have chosen a robust and scalable infrastructure to handle large data volumes.
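The ELT ordering can be sketched in a few lines. In this minimal example, raw values are loaded untouched into a staging table first, and the cleanup then runs as SQL inside the target system. Python's built-in `sqlite3` module is used only as a local stand-in for a cloud data warehouse, and the `raw_sites` and `sites` table names and sample rows are assumptions for illustration.

```python
import sqlite3

# Raw extract: everything arrives as text, with stray whitespace.
raw_rows = [
    ("1", " 46.8139", "-71.2080 "),
    ("2", "45.5017", "-73.5673"),
]

# SQLite stands in for the warehouse (assumption for this sketch).
conn = sqlite3.connect(":memory:")

# Load: store the data as-is, with no transformation beforehand.
conn.execute("CREATE TABLE raw_sites (id TEXT, lat TEXT, lon TEXT)")
conn.executemany("INSERT INTO raw_sites VALUES (?, ?, ?)", raw_rows)

# Transform: clean and type the data inside the target system.
conn.execute(
    """
    CREATE TABLE sites AS
    SELECT CAST(id AS INTEGER)      AS id,
           CAST(TRIM(lat) AS REAL)  AS lat,
           CAST(TRIM(lon) AS REAL)  AS lon
    FROM raw_sites
    """
)

sites = conn.execute("SELECT id, lat, lon FROM sites ORDER BY id").fetchall()
print(sites)
```

Because the transformation is a query in the target system, it scales with that system's compute rather than with a separate transformation server, which is why the infrastructure choice matters.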

But big data is not the only consideration. A lack of data quality can also substantially impact the success of the migration process, as it requires considerable effort and investment in data cleaning and data enriching. Again, since data is loaded before transformation in ELT, any data quality issues can be detected only after the data has been loaded. Therefore, data quality checks should be implemented at different stages of the ELT process to avoid errors.
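A post-load quality check can be as simple as a handful of counting queries run against the staging table before any transformation. The sketch below is one possible shape for such checks, again using `sqlite3` as a local stand-in for a warehouse; the table, the sample rows, and the three check names are assumptions chosen for illustration, not a complete validation suite.

```python
import sqlite3

# SQLite stands in for the warehouse (assumption for this sketch).
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE raw_sites (id INTEGER, lat REAL, lon REAL)")
conn.executemany(
    "INSERT INTO raw_sites VALUES (?, ?, ?)",
    [
        (1, 46.8, -71.2),
        (2, None, -73.5),   # missing latitude
        (3, 95.0, 10.0),    # latitude outside [-90, 90]
        (1, 46.8, -71.2),   # duplicate id
    ],
)

# Each check is a query that counts offending rows in the loaded data.
CHECKS = {
    "missing_coordinates":
        "SELECT COUNT(*) FROM raw_sites WHERE lat IS NULL OR lon IS NULL",
    "out_of_range":
        "SELECT COUNT(*) FROM raw_sites "
        "WHERE lat NOT BETWEEN -90 AND 90 OR lon NOT BETWEEN -180 AND 180",
    "duplicate_ids":
        "SELECT COUNT(*) FROM "
        "(SELECT id FROM raw_sites GROUP BY id HAVING COUNT(*) > 1)",
}

report = {name: conn.execute(sql).fetchone()[0] for name, sql in CHECKS.items()}

for name, count in report.items():
    status = "OK" if count == 0 else f"{count} issue(s)"
    print(f"{name}: {status}")
```

The same queries can be rerun after the transformation step, so that problems introduced at either stage are caught close to where they occur.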

Resource and Cost Management

Cloud-based solutions are generally more cost-effective than traditional infrastructure, due to reduced hardware and maintenance costs, as well as the ability to pay only for the resources that are being used. However, the data migration process can be more costly than expected in terms of time and money when it is not based on a solid strategy.

Indeed, inadequate research and planning, for example about the cloud provider and its data policies, can result in unexpected bills or fees. Ideally, the whole process should be completed in multiple stages, with extensive testing and validation between each of these stages. This requires significant technical expertise and investment in new skills for loading large amounts of data into the target system.

Unfortunately, companies often lack the expertise and resources necessary to fully exploit new technologies autonomously. This is where Korem’s managed services come in handy, as they allow you to outsource the management of your operations to our team of highly qualified experts.

Data Security and Privacy

Cloud providers typically implement various security measures, such as encryption, role-based access controls, and regular audits. This ensures optimal data protection, privacy, and compliance with regulatory requirements, and protects customer data and infrastructure from cyber-attacks and other threats. Moreover, cloud services often offer built-in disaster recovery solutions, allowing organizations to quickly recover their data and applications in the event of an outage.

However, you must ensure that the security protocols provided by your chosen cloud provider meet your data security and privacy requirements. This is even more true for public cloud deployments, where an organization shares servers and infrastructures with other cloud clients. In this context, server vulnerabilities could lead to data leaks or other security incidents.

In addition, you may not have visibility into where your data and applications are actually hosted in public cloud deployments, which can be problematic for certain data privacy laws such as the GDPR. For example, personally identifiable information (PII), personal data, or sensitive data will likely not be authorized for transit to external data platforms.

According to a SANS survey, 56% of respondents are highly concerned about security as one of the major cloud migration challenges. Hence, data governance is essential: it lets you implement stringent security measures to ensure that data is secure, organized, and managed effectively, and that each user has access to the right level of data.

Fortunately, through our contract management offer and licensing compliance support, we can help you better understand the terms and conditions (T&Cs) associated with each dataset and, if necessary, negotiate new T&Cs with your chosen provider.

Data Visualization Tools

Lastly, if you want to take full advantage of your data to answer business questions and solve geospatial problems, you must ensure that you choose the right visualization tool. Since visualizing massive amounts of data requires specialized tools and methodologies, your previous visualization solution may no longer be suitable for your needs.

For example, with the CARTO Platform, you can quickly create intuitive location intelligence applications or use an application template to accelerate your geospatial analysis. It is also compatible with the leading cloud data platforms and analytics tools, such as Google BigQuery, Snowflake, Amazon Redshift, and Databricks. Learn more about geospatial technology taking on cloud-native platforms.

Is Your Cloud Solution Right for You?

When properly leveraged, cloud solutions can reduce costs, improve data accuracy and security, offer scalability, and provide business insights for data-driven decisions. But before choosing a cloud data warehouse, you should always ask yourself these few questions:

- What is my cloud vision?

- What are my main spatial challenges (analytics, geocoding, routing, etc.)?

- How would I describe my datasets (PII, sensitive, etc.)?

- What are my expectations in terms of volume, response time, and uptime?

- Would I describe my workload as a batch process, real-time, or a mix of both?

- Am I already leveraging geospatial solutions? If so, what do they accomplish and where are they hosted?

- How can the integration of geospatial data into a cloud strategy be a game changer if I am in the utilities space?

If you’re not sure whether your cloud data platform is right for you, or if you haven’t made up your mind yet, Korem has the expertise and products to help you choose the best option for your needs and to assist you with complex cloud migration challenges.